Bayesian synthetic control workflow#

The synthetic control method is one of the most influential innovations in causal inference for policy evaluation [Athey and Imbens, 2017]. This notebook demonstrates the full workflow: not just model fitting, but the pipeline of feasibility assessment, estimation, and validation recommended by Abadie [2021], augmented with Bayesian design tools from the geo-experiment literature [Meta Incubator, 2022, Brodersen et al., 2015].

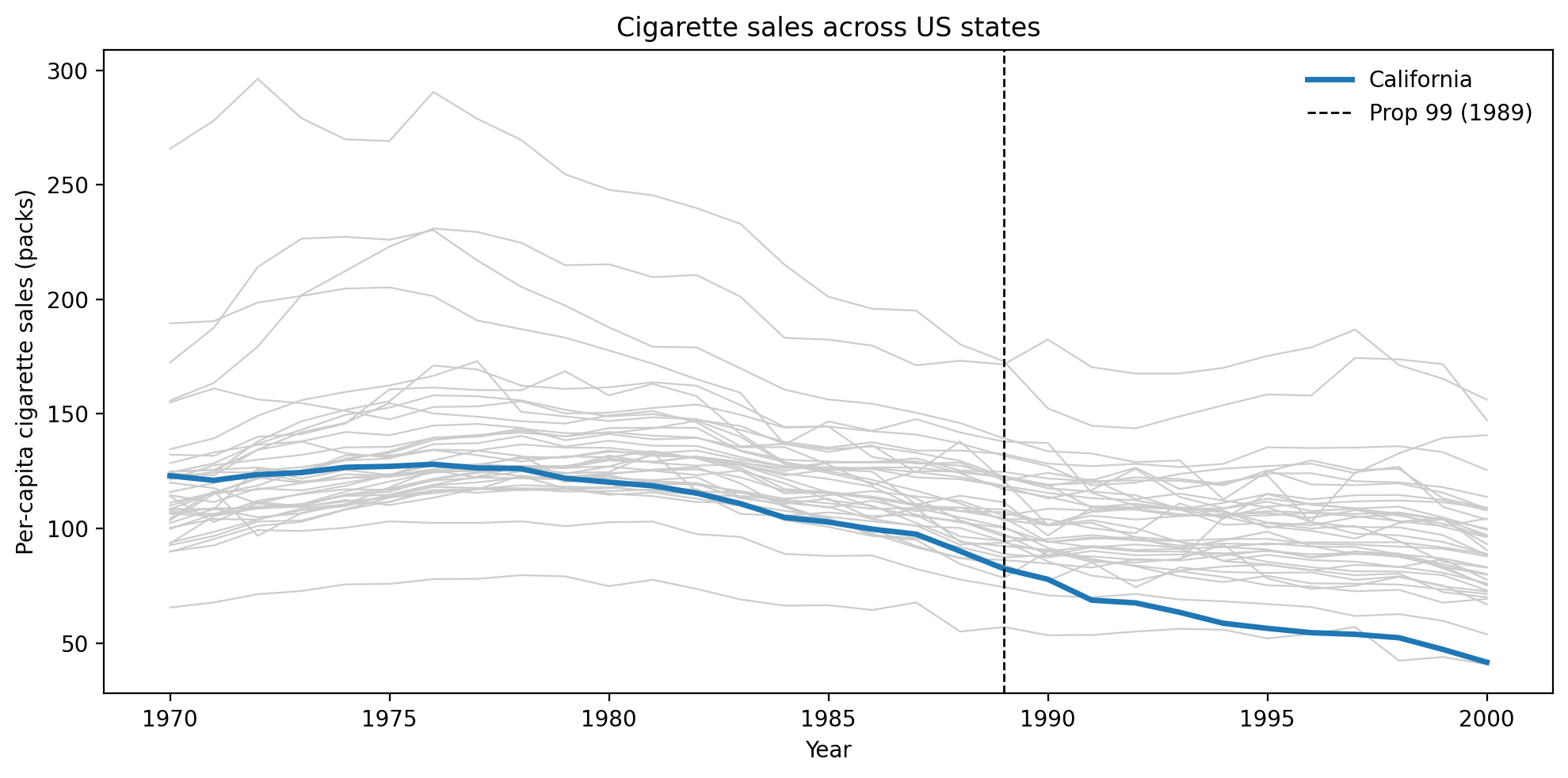

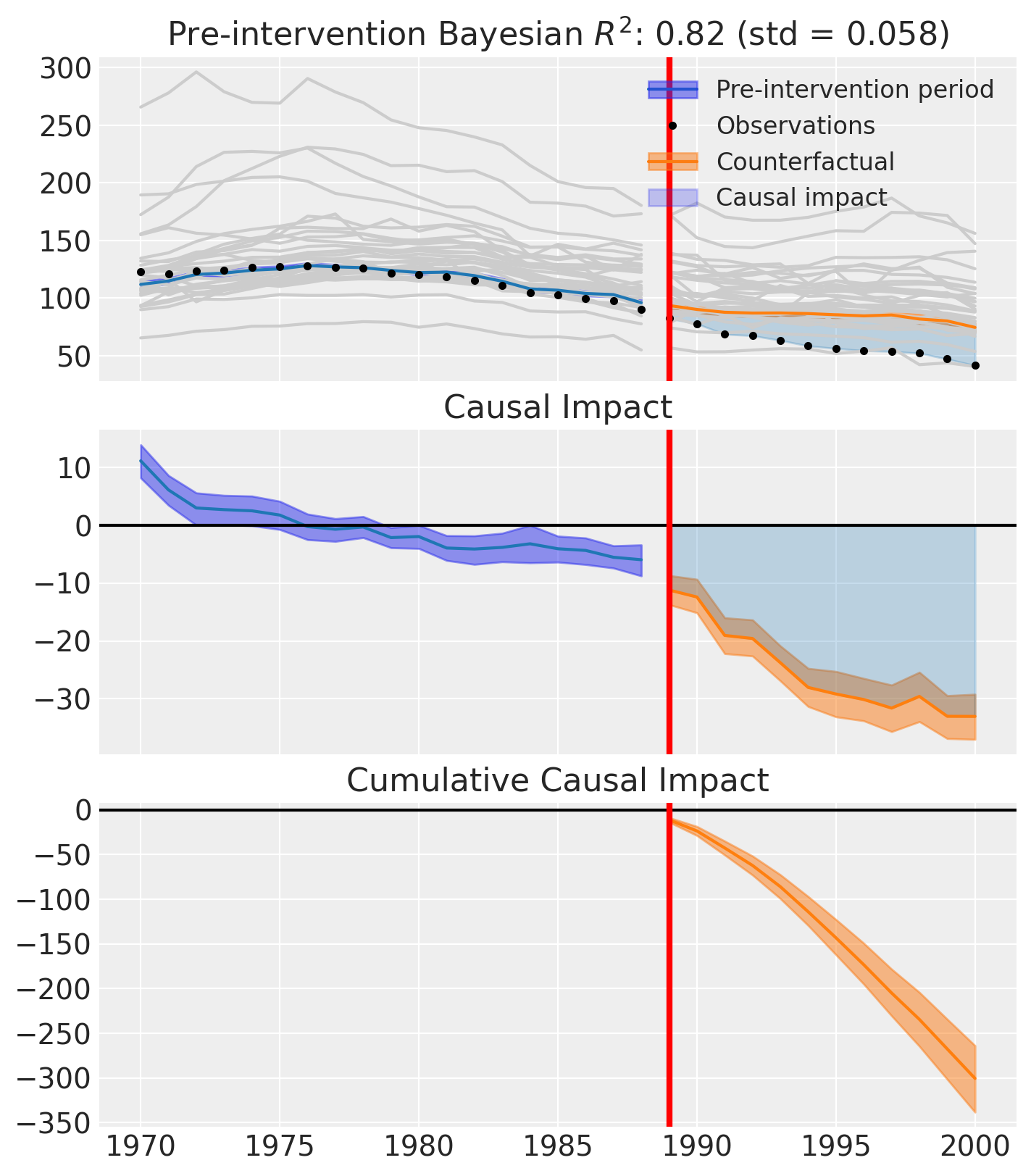

We use the California Proposition 99 dataset, the canonical example from the SC literature. In 1988, California passed Proposition 99, which imposed a 25-cent tax on cigarette packs. Abadie et al. [2010] estimated the causal effect of this policy on per-capita cigarette sales using 38 other US states as a donor pool. This example is ideal for demonstrating the workflow because the treatment effect is large, sustained, and well-documented.

Before the experiment

Donor pool selection. Inspect pairwise correlations between the treated unit and candidate controls in the pre-period. Donors with weak or negative correlations should be excluded, because they force the model to extrapolate rather than interpolate, undermining the counterfactual [Abadie et al., 2010, Abadie, 2021].

Convex hull condition. Verify that the treated unit’s pre-intervention trajectory falls within the range spanned by the control units. If it does not, the weighted combination cannot reconstruct the treated series accurately and the method’s core assumption is violated [Abadie, 2021].

Donor pool quality scoring. Quantify correlation strength, convex hull coverage, and weight concentration in a single diagnostic report. This translates the visual checks above into a scored summary that flags potential problems before any experiment is run.

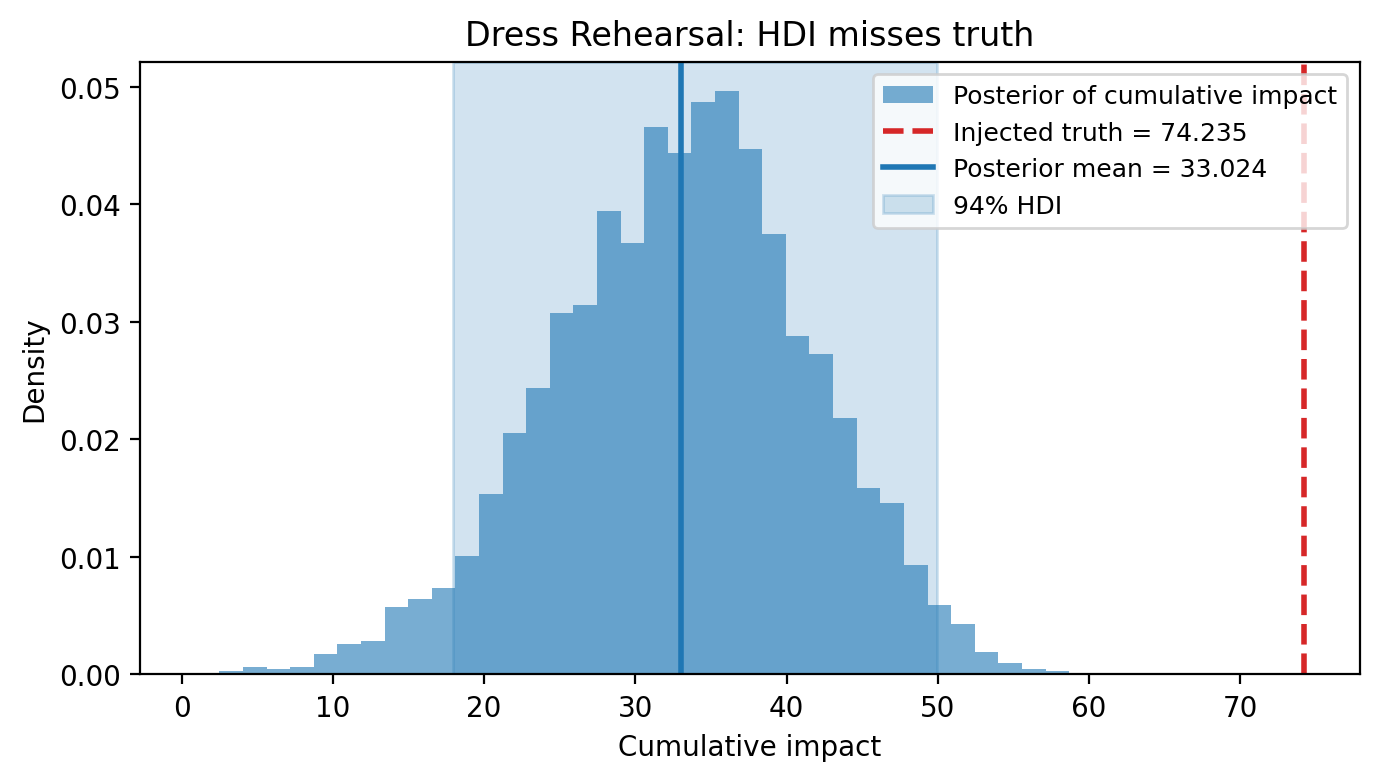

Dress rehearsal. Split the pre-period, inject a known synthetic effect into the held-out window, and check whether the model recovers it. A passing dress rehearsal confirms the design can detect and correctly estimate an effect of the anticipated magnitude — extending the placebo-in-time validation concept from Abadie et al. [2010].

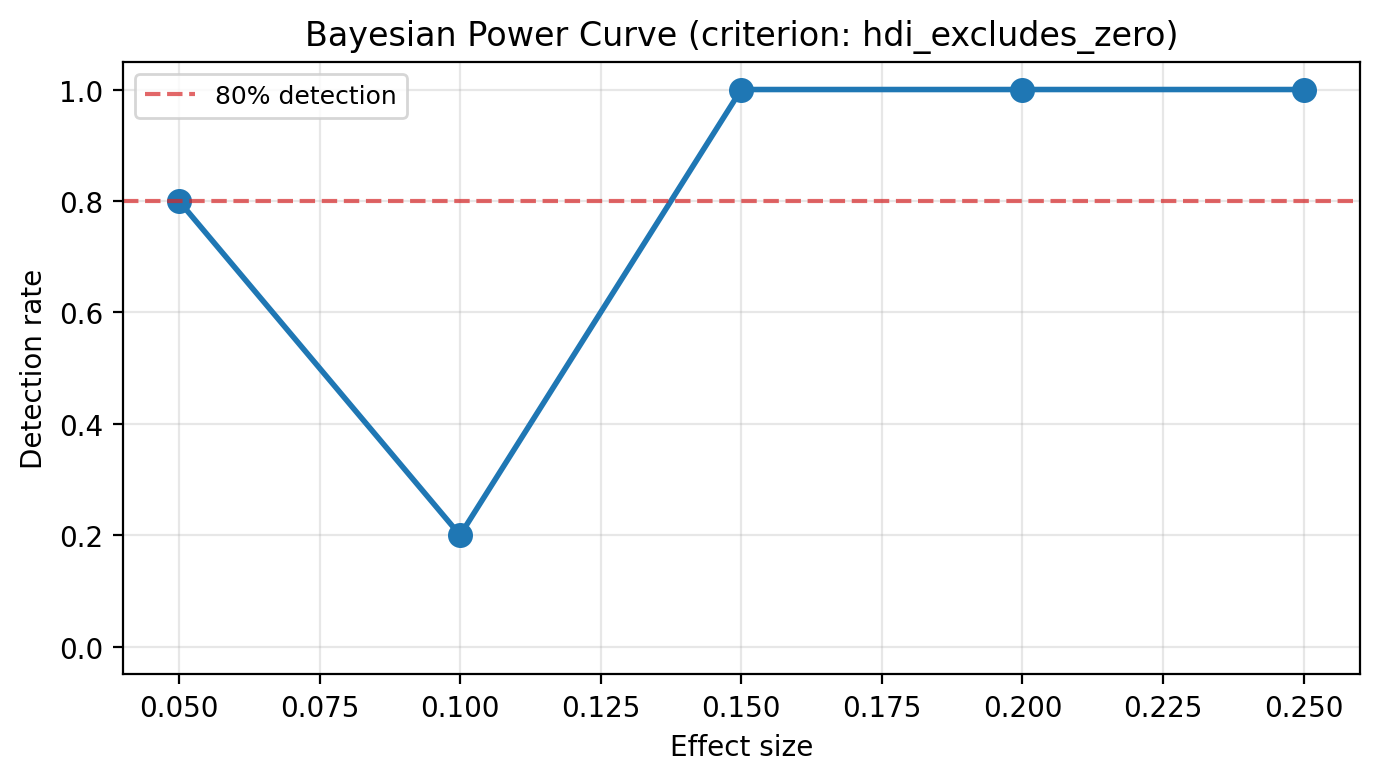

Power analysis. Repeat the dress rehearsal across a range of effect sizes to build a Bayesian power curve. This identifies the minimum detectable effect — the smallest treatment effect the design can reliably distinguish from noise — and determines whether the experiment is worth running [Meta Incubator, 2022].

After the experiment

Model fitting. Fit the synthetic control on the full dataset, including both pre- and post-intervention observations. The model learns donor weights from the pre-period and projects the counterfactual into the post-period [Abadie et al., 2010, Abadie and Gardeazabal, 2003].

Causal impact estimation. Compare observed outcomes to the synthetic counterfactual to estimate the average and cumulative causal effect of the intervention. Posterior intervals quantify uncertainty around these estimates [Brodersen et al., 2015].
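As a rough illustration of this step, the sketch below computes average and cumulative impact from posterior draws of the counterfactual. The observed values, the draws, and all variable names are hypothetical stand-ins, not CausalPy output.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical observed post-period outcomes (5 periods).
observed = np.array([90.0, 85.0, 80.0, 76.0, 72.0])

# Hypothetical posterior draws of the counterfactual: (n_draws, n_periods).
counterfactual_draws = 100.0 + rng.normal(0.0, 2.0, size=(2000, 5))

# Pointwise impact for every draw, then the two summaries named above.
impact_draws = observed - counterfactual_draws   # (n_draws, n_periods)
average_impact = impact_draws.mean(axis=1)       # one value per draw
cumulative_impact = impact_draws.sum(axis=1)     # one value per draw

print(f"posterior mean of average impact:    {average_impact.mean():.1f}")
print(f"posterior mean of cumulative impact: {cumulative_impact.mean():.1f}")
```

Because every draw yields its own average and cumulative impact, an interval over those draws carries the counterfactual uncertainty through to the effect estimate.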

Effect summary reporting. Produce a decision-ready summary with point estimates, HDIs, tail probabilities, and relative effects: the information a stakeholder needs to act on the result.

After the experiment: robustness and sensitivity

Estimation alone is not enough — the SC literature strongly recommends a battery of post-estimation checks to validate the causal claims [Abadie et al., 2010, Athey and Imbens, 2017, Abadie et al., 2015].

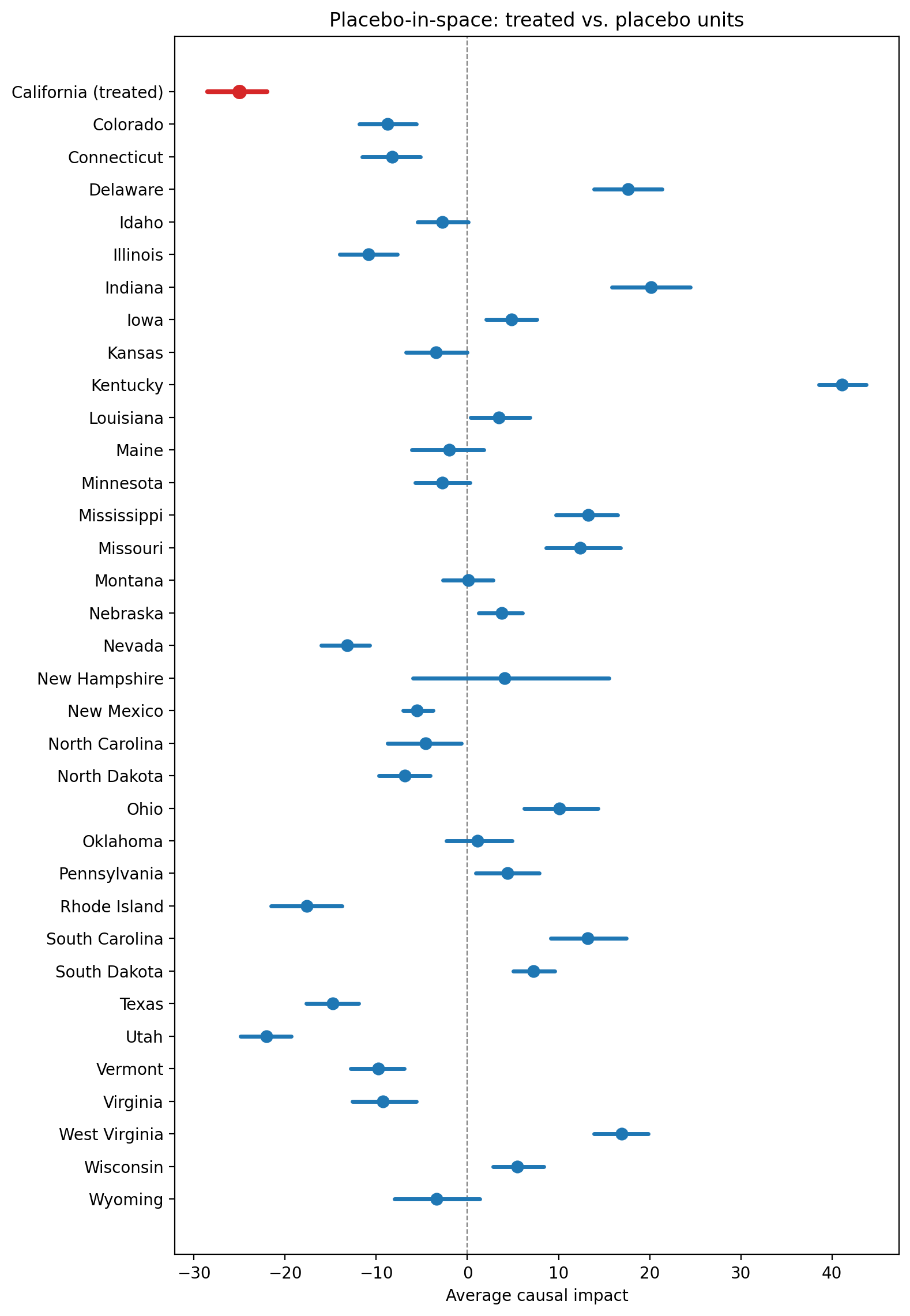

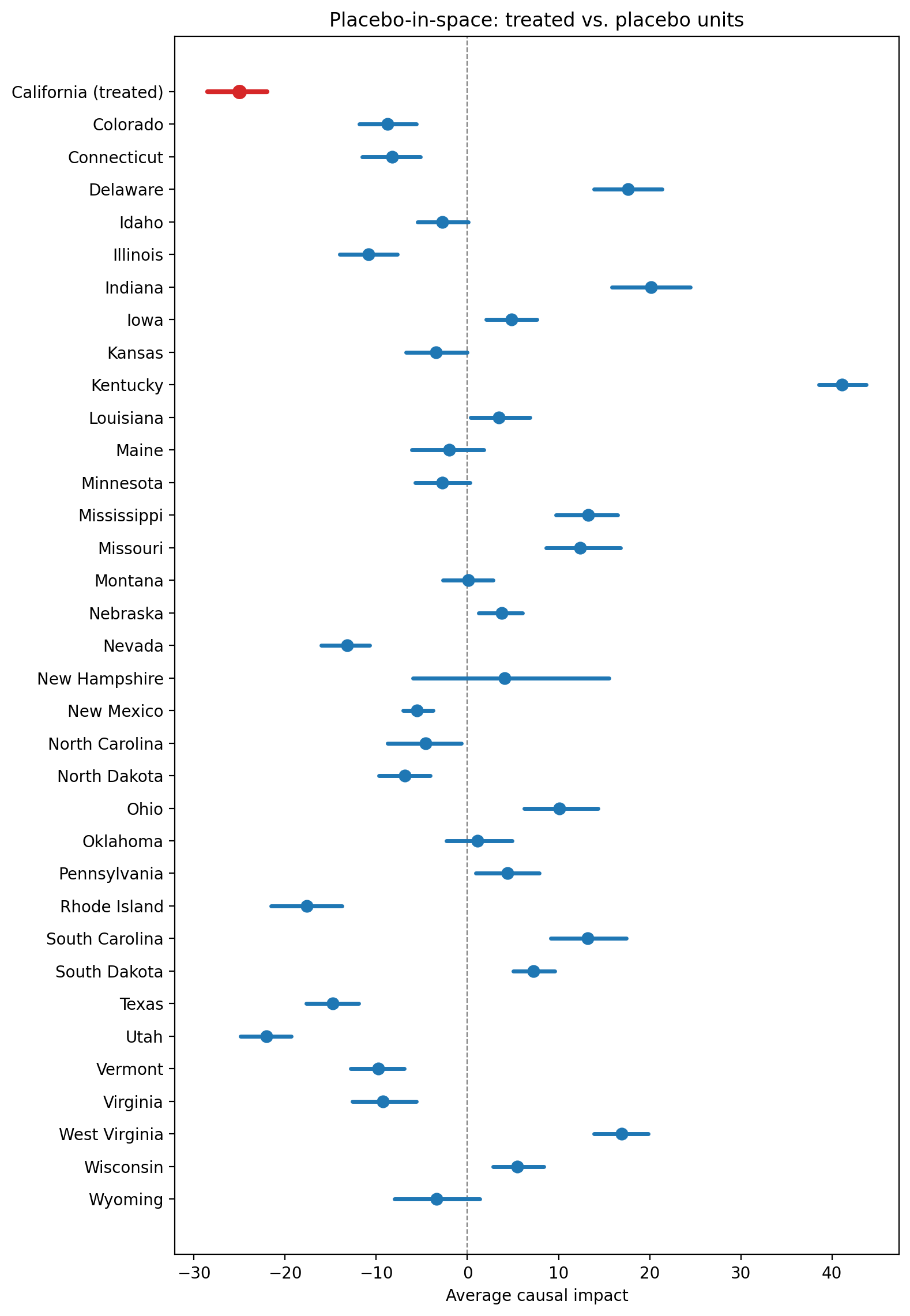

Placebo-in-space. Reassign treatment to each control unit in turn and compare placebo effects to the actual estimate. If placebos produce effects as large as the real one, the causal claim is weakened.
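One common way to summarise this comparison is the share of units whose estimated effect is at least as extreme as the treated unit's. The sketch below uses made-up effect values purely for illustration; it is not necessarily how CausalPy reports the placebo test.

```python
import numpy as np

# Hypothetical cumulative effect estimates (illustrative numbers only).
treated_effect = -120.0
placebo_effects = np.array([15.0, -30.0, 8.0, -45.0, 22.0, -130.0, 5.0, -12.0, 40.0])

# Share of units (placebos plus the treated unit itself) whose effect is at
# least as extreme as the treated unit's estimate.
all_effects = np.append(placebo_effects, treated_effect)
share_as_extreme = np.mean(np.abs(all_effects) >= abs(treated_effect))

print(f"share at least as extreme: {share_as_extreme:.2f}")
```

A small share means few placebo units mimic the treated effect; a large share means the estimate is hard to distinguish from what untreated units produce.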

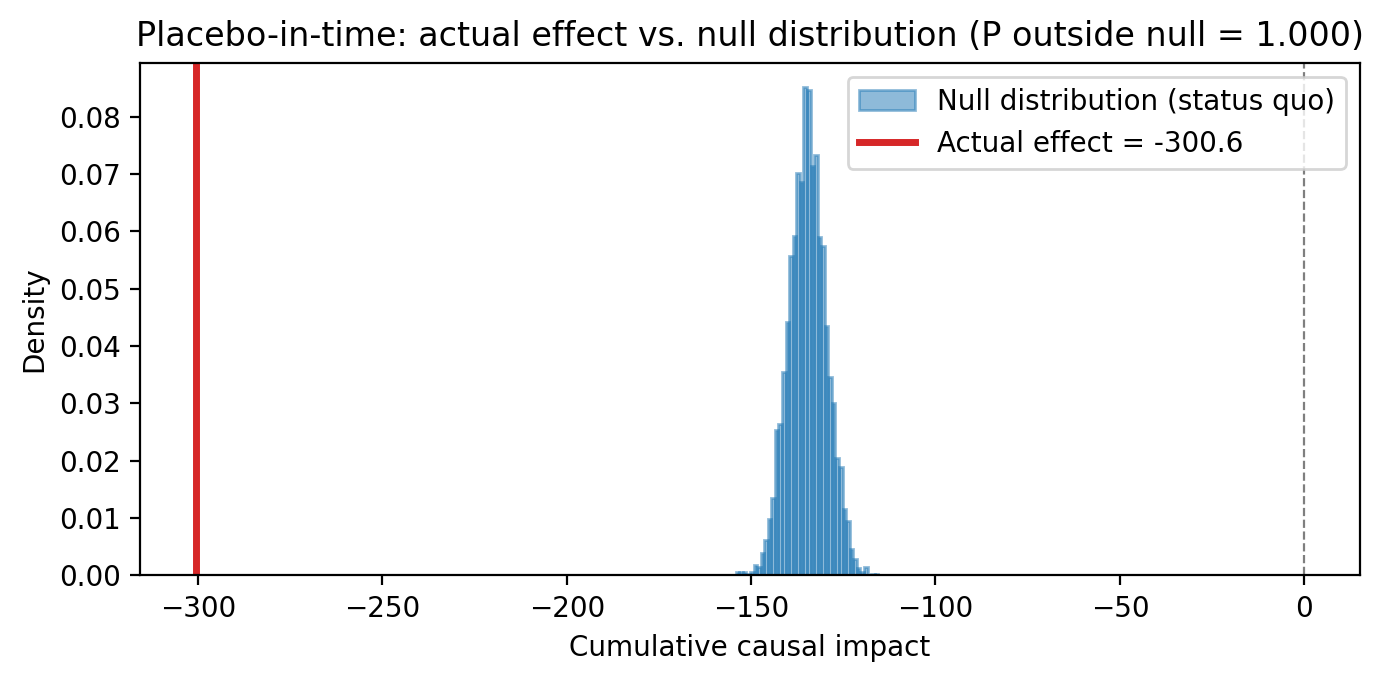

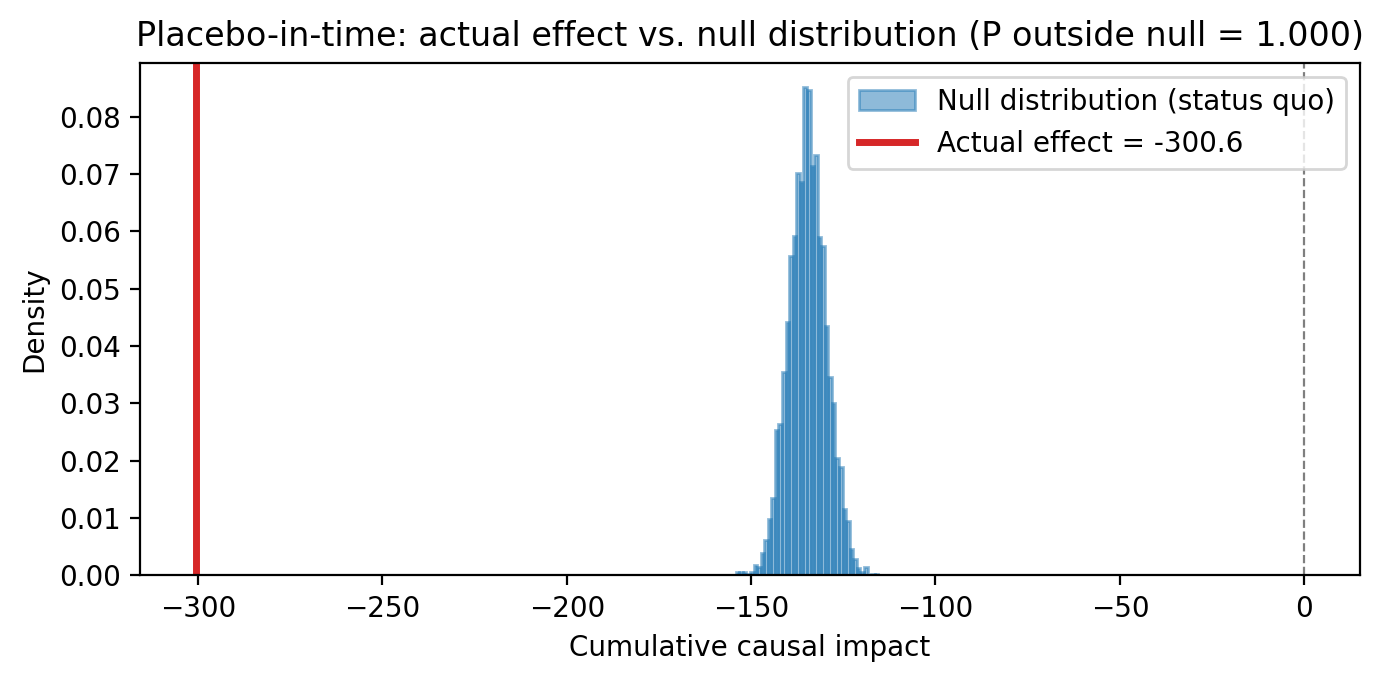

Placebo-in-time. Reassign the intervention to a date in the pre-period. A well-specified model should show no effect where none occurred.

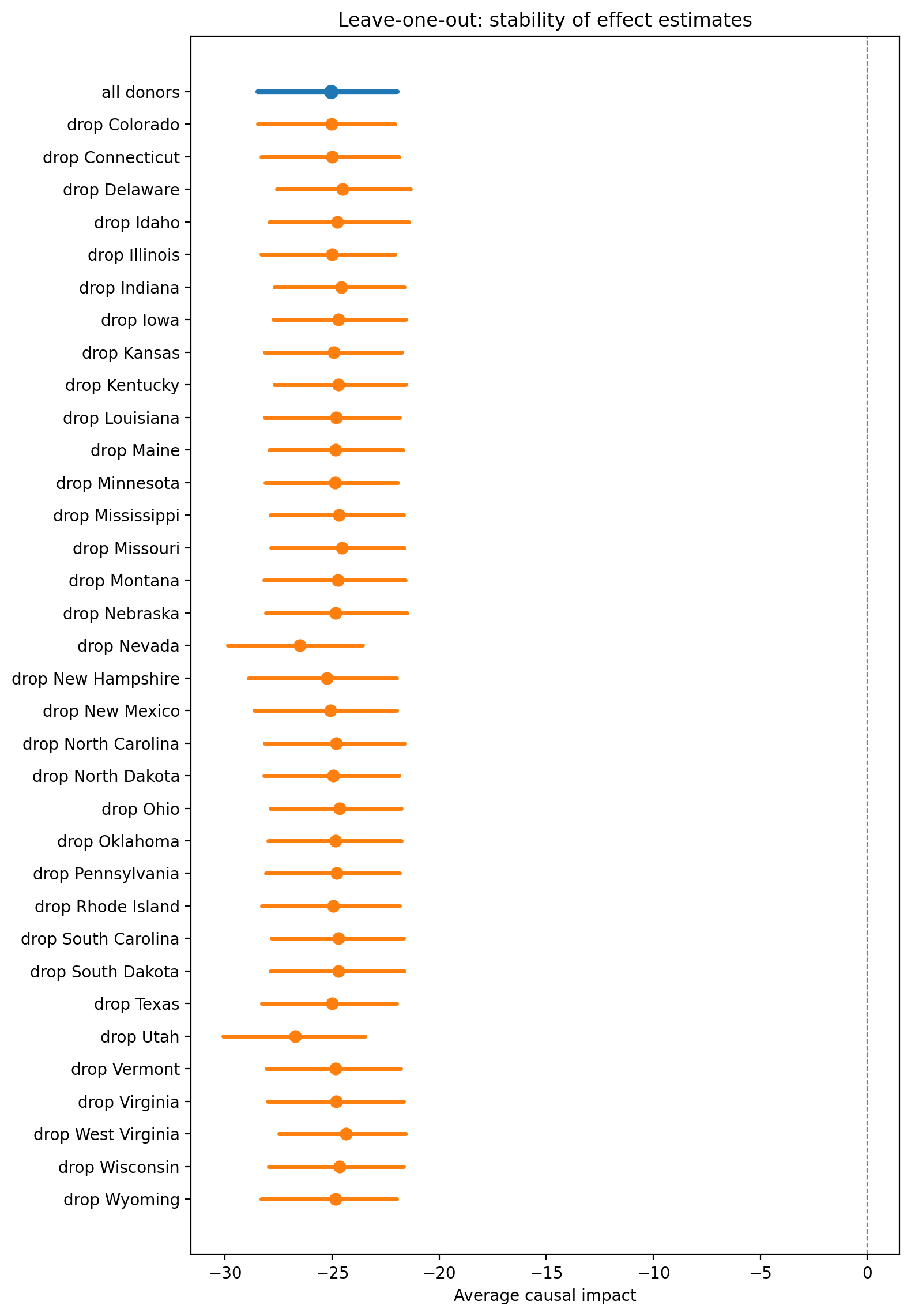

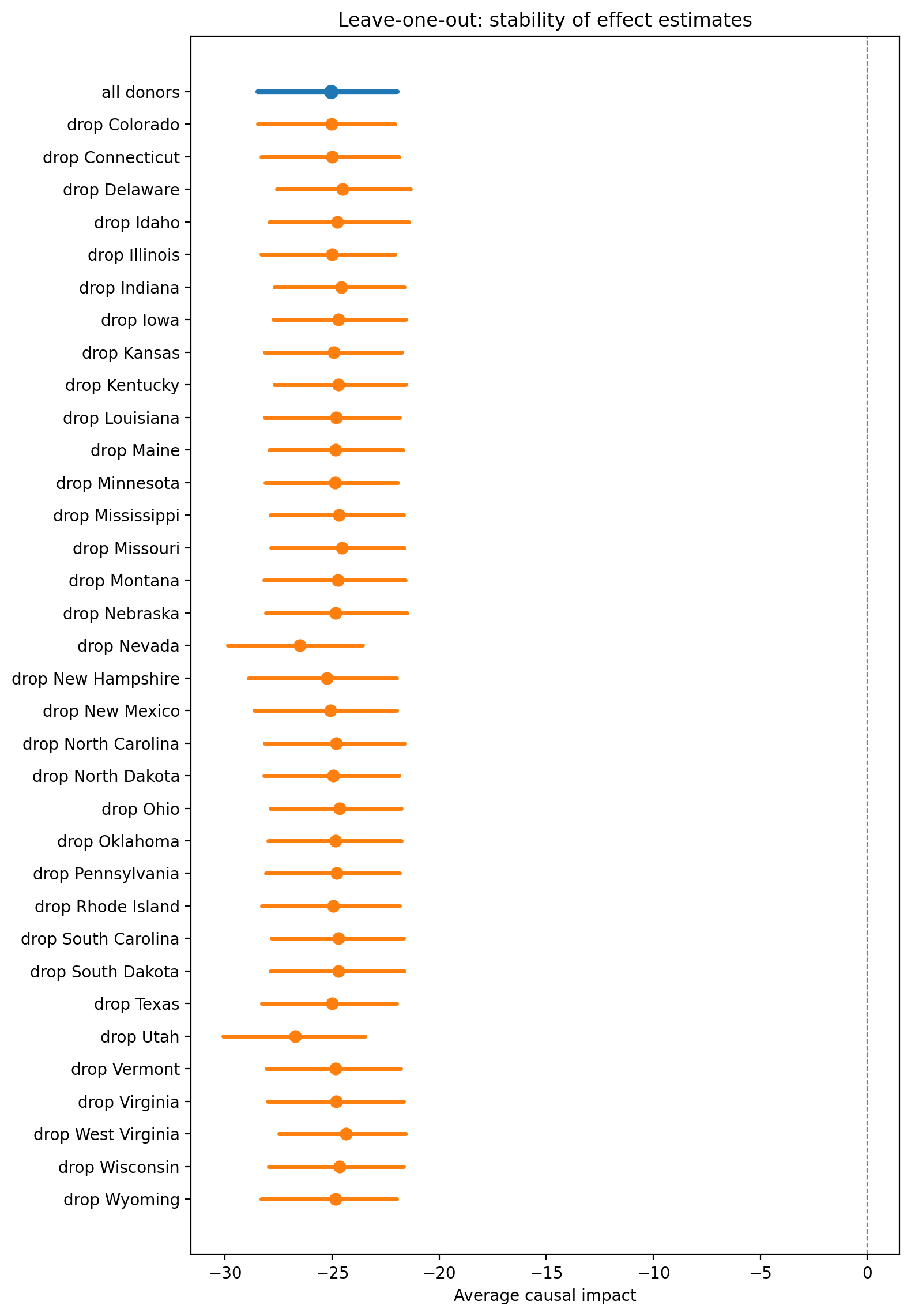

Leave-one-out robustness. Remove each donor unit in turn and re-estimate. Stable results across these perturbations indicate the estimate is not driven by a single unit.

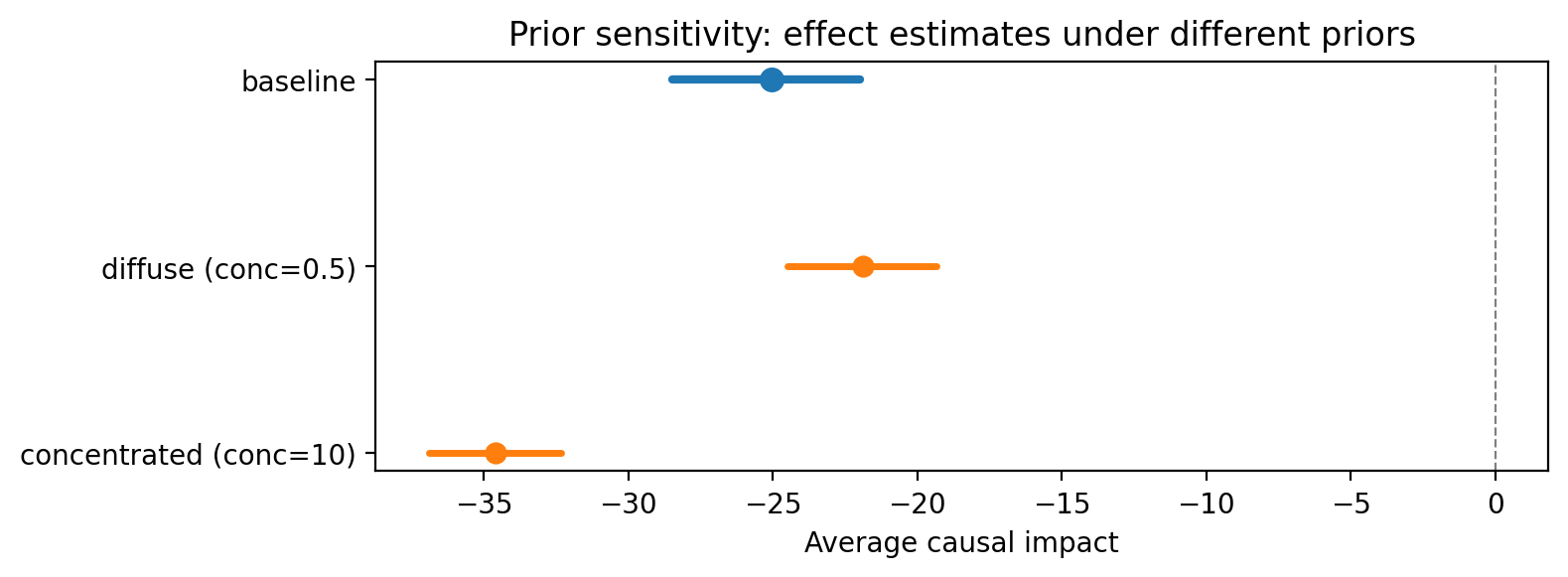

Prior sensitivity. For Bayesian SC, vary the prior specification and check that posterior effect estimates are not dominated by the prior.

import arviz as az
import matplotlib.pyplot as plt
import numpy as np

import causalpy as cp

%load_ext autoreload
%autoreload 2
%config InlineBackend.figure_format = 'retina'
seed = 42

Load data#

We load the California Proposition 99 dataset — per-capita cigarette pack sales across 39 US states from 1970 to 2000. California (the treated unit) enacted Proposition 99 in 1988, with the tax taking effect in 1989. The 38 remaining states serve as the donor pool.

df = cp.load_data("prop99").set_index("Year")
treatment_time = 1989
treated_unit = "California"
control_units = [c for c in df.columns if c != treated_unit]
df.head()

| Year | Alabama | Arkansas | California | Colorado | Connecticut | Delaware | Georgia | Idaho | Illinois | Indiana | ... | South Carolina | South Dakota | Tennessee | Texas | Utah | Vermont | Virginia | West Virginia | Wisconsin | Wyoming |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1970 | 89.8 | 100.3 | 123.0 | 124.8 | 120.0 | 155.0 | 109.9 | 102.4 | 124.8 | 134.6 | ... | 103.6 | 92.7 | 99.8 | 106.4 | 65.5 | 122.6 | 124.3 | 114.5 | 106.4 | 132.2 |
| 1971 | 95.4 | 104.1 | 121.0 | 125.5 | 117.6 | 161.1 | 115.7 | 108.5 | 125.6 | 139.3 | ... | 115.0 | 96.7 | 106.3 | 108.9 | 67.7 | 124.4 | 128.4 | 111.5 | 105.4 | 131.7 |
| 1972 | 101.1 | 103.9 | 123.5 | 134.3 | 110.8 | 156.3 | 117.0 | 126.1 | 126.6 | 149.2 | ... | 118.7 | 103.0 | 111.5 | 108.6 | 71.3 | 138.0 | 137.0 | 117.5 | 108.8 | 140.0 |
| 1973 | 102.9 | 108.0 | 124.4 | 137.9 | 109.3 | 154.7 | 119.8 | 121.8 | 124.4 | 156.0 | ... | 125.5 | 103.5 | 109.7 | 110.4 | 72.7 | 146.8 | 143.1 | 116.6 | 109.5 | 141.2 |
| 1974 | 108.2 | 109.7 | 126.7 | 132.8 | 112.4 | 151.3 | 123.7 | 125.6 | 131.9 | 159.6 | ... | 129.7 | 108.4 | 114.8 | 114.7 | 75.6 | 151.8 | 149.6 | 119.9 | 111.8 | 145.8 |

5 rows × 39 columns

fig, ax = plt.subplots(figsize=(10, 5))
# Donor states in grey, with the treated unit highlighted on top.
for state in control_units:
    ax.plot(df.index, df[state], color="0.8", linewidth=0.8)
ax.plot(df.index, df[treated_unit], color="C0", linewidth=2.5, label=treated_unit)
ax.axvline(
    treatment_time, color="k", linestyle="--", linewidth=1, label="Prop 99 (1989)"
)
ax.set(
    xlabel="Year",
    ylabel="Per-capita cigarette sales (packs)",
    title="Cigarette sales across US states",
)
ax.legend(frameon=False)
fig.tight_layout()

All states share a broad downward trend in cigarette consumption from the 1970s onward, reflecting national public-health campaigns and changing social norms. California (blue) tracks the middle of the donor pack in the pre-period, confirming that a synthetic control constructed from these states is plausible. After Proposition 99 takes effect in 1989, California’s trajectory drops noticeably below the donor band — the gap between the blue line and the grey bundle is the visual signature of the treatment effect we aim to estimate.

Before the experiment: assessing the design#

Will this experiment detect the effect we care about? Before committing budget, we should assess whether the synthetic control design can plausibly recover an effect from the available donor pool. Everything in this section uses only pre-period data — no post-period observations are needed.

We begin with visual checks — inspecting donor correlations and the convex hull condition — then move to quantitative tools: donor pool quality scoring, a dress rehearsal, and a power analysis. For the quantitative tools we fit the SC model on historical data alone using SyntheticControl.from_pre_period().

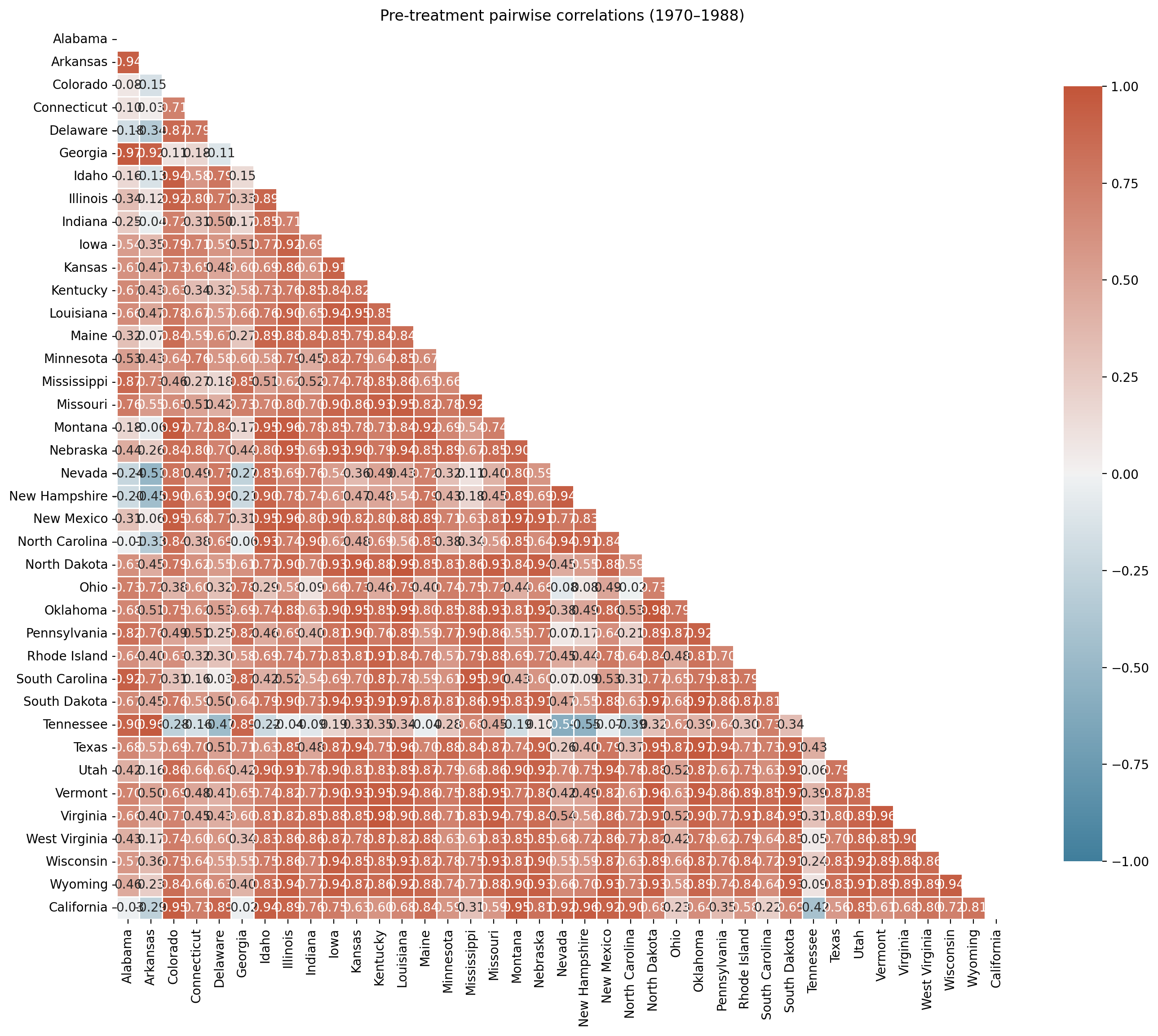

Donor pool selection#

Before fitting, it is good practice to inspect pairwise correlations among units in the pre-treatment period. The donor pool should consist of units not affected by the intervention and driven by the same structural process as the treated unit [Abadie, 2021]. A poorly chosen pool forces the synthetic control to extrapolate rather than interpolate, introducing bias [Abadie et al., 2010]. Donor units that experienced similar shocks or policy changes during the study period should also be excluded [Abadie et al., 2015].

For the Proposition 99 analysis, Abadie et al. [2010] excluded states that enacted large tobacco tax increases or control programs during the study period. The dataset we use here already reflects their curated 38-state donor pool.

Tip

Beyond correlations, practitioners should verify that (a) no donor units were affected by similar interventions during the study window [Abadie et al., 2015] and (b) relevant pre-treatment covariates are also balanced, when available. Donor pool composition is one of the most consequential choices in SC analysis, and pre-treatment outcome matching alone may be insufficient [Pickett et al., 2025].

# .loc slicing is label-inclusive, so stop at the last pre-treatment year (1988).
pre = df.loc[: treatment_time - 1]
corr, ax = cp.plot_correlations(
    pre, columns=control_units + [treated_unit], figsize=(16, 14)
)
ax.set(title="Pre-treatment pairwise correlations (1970–1988)");

Most control states are positively correlated with California in the pre-period, reflecting shared national trends in declining cigarette consumption. However, a few states show negative or near-zero correlations — these units’ pre-treatment trajectories moved in the opposite direction to California’s and would force the model to assign them negative weight (which the Dirichlet prior prohibits) or effectively ignore them.

Including such units is not harmful in a strict sense — they will receive near-zero weight — but they add noise to the optimisation and can slow convergence. More importantly, Abadie [2021] recommends that the donor pool consist of units “driven by the same structural process” as the treated unit, and a negative pre-treatment correlation is evidence against that assumption.

We apply a correlation threshold of 0.0 to exclude states whose pre-treatment cigarette sales moved in the opposite direction to California’s. This is a conservative choice — it only removes clearly unsuitable donors rather than aggressively pruning the pool. A stricter threshold (e.g., 0.5) would retain only the best-matching states but risks excluding units that contribute useful information. The right threshold depends on domain knowledge and the number of donors available; with 38 candidates, we can afford to be selective without starving the model of donors.

from matplotlib.colors import Normalize

# Map full state names (the DataFrame columns) to tile labels.
STATE_ABBREV = {
    "Alabama": "AL",
    "Arkansas": "AR",
    "California": "CA",
    "Colorado": "CO",
    "Connecticut": "CT",
    "Delaware": "DE",
    "Georgia": "GA",
    "Idaho": "ID",
    "Illinois": "IL",
    "Indiana": "IN",
    "Iowa": "IA",
    "Kansas": "KS",
    "Kentucky": "KY",
    "Louisiana": "LA",
    "Maine": "ME",
    "Minnesota": "MN",
    "Mississippi": "MS",
    "Missouri": "MO",
    "Montana": "MT",
    "Nebraska": "NE",
    "Nevada": "NV",
    "New Hampshire": "NH",
    "New Mexico": "NM",
    "North Carolina": "NC",
    "North Dakota": "ND",
    "Ohio": "OH",
    "Oklahoma": "OK",
    "Pennsylvania": "PA",
    "Rhode Island": "RI",
    "South Carolina": "SC_state",
    "South Dakota": "SD",
    "Tennessee": "TN",
    "Texas": "TX",
    "Utah": "UT",
    "Vermont": "VT",
    "Virginia": "VA",
    "West Virginia": "WV",
    "Wisconsin": "WI",
    "Wyoming": "WY",
}

# Tile grid positions (col, row) approximating US geography.
GRID = {
    "AK": (0, 0),
    "ME": (10, 0),
    "VT": (8, 1),
    "NH": (9, 1),
    "WA": (0, 2),
    "ID": (1, 2),
    "MT": (2, 2),
    "ND": (3, 2),
    "MN": (4, 2),
    "WI": (5, 2),
    "MI": (7, 2),
    "NY": (8, 2),
    "MA": (9, 2),
    "RI": (10, 2),
    "CT": (11, 2),
    "OR": (0, 3),
    "NV": (1, 3),
    "WY": (2, 3),
    "SD": (3, 3),
    "IA": (4, 3),
    "IL": (5, 3),
    "IN": (6, 3),
    "OH": (7, 3),
    "PA": (8, 3),
    "NJ": (9, 3),
    "CA": (0, 4),
    "UT": (1, 4),
    "CO": (2, 4),
    "NE": (3, 4),
    "MO": (4, 4),
    "KY": (5, 4),
    "WV": (6, 4),
    "VA": (7, 4),
    "MD": (8, 4),
    "DE": (9, 4),
    "AZ": (1, 5),
    "NM": (2, 5),
    "KS": (3, 5),
    "AR": (4, 5),
    "TN": (5, 5),
    "NC": (6, 5),
    "SC": (7, 5),
    "OK": (3, 6),
    "LA": (4, 6),
    "MS": (5, 6),
    "AL": (6, 6),
    "GA": (7, 6),
    "HI": (0, 7),
    "TX": (3, 7),
    "FL": (8, 7),
}

calif_corr = corr[treated_unit].drop(treated_unit)
abbrev_corr = {
    STATE_ABBREV[name]: val for name, val in calif_corr.items() if name in STATE_ABBREV
}
abbrev_corr = {("SC" if k == "SC_state" else k): v for k, v in abbrev_corr.items()}

r = 0.46
spacing = 1.0
cmap = plt.cm.RdYlGn
norm = Normalize(vmin=-0.2, vmax=1.0)

fig, ax = plt.subplots(figsize=(12, 7))
for abbr, (col, row) in GRID.items():
    x = col * spacing
    y = -row * spacing
    if abbr == "CA":
        # Treated unit: solid highlight colour.
        color, ec = "C0", "C0"
        label = "CA\n(treated)"
    elif abbr in abbrev_corr:
        # Donor state: colour by its pre-treatment correlation with California.
        color = cmap(norm(abbrev_corr[abbr]))
        ec = "0.6"
        label = f"{abbr}\n{abbrev_corr[abbr]:.2f}"
    else:
        # State not in the dataset: light grey.
        color, ec = "0.93", "0.8"
        label = abbr
    circle = plt.Circle((x, y), r, facecolor=color, edgecolor=ec, linewidth=1.2)
    ax.add_patch(circle)
    ax.text(
        x,
        y,
        label,
        ha="center",
        va="center",
        fontsize=6,
        fontweight="bold" if abbr == "CA" else "normal",
        color="white" if abbr == "CA" else "0.15",
    )

sm = plt.cm.ScalarMappable(cmap=cmap, norm=norm)
sm.set_array([])
cbar = fig.colorbar(sm, ax=ax, shrink=0.6, aspect=25, pad=0.02)
cbar.set_label("Correlation with California (pre-treatment)")
ax.set_aspect("equal")
ax.autoscale_view()
ax.axis("off")
ax.set_title(
    "Pre-treatment correlation with California by state",
    fontsize=14,
    fontweight="bold",
    pad=15,
)
fig.tight_layout()

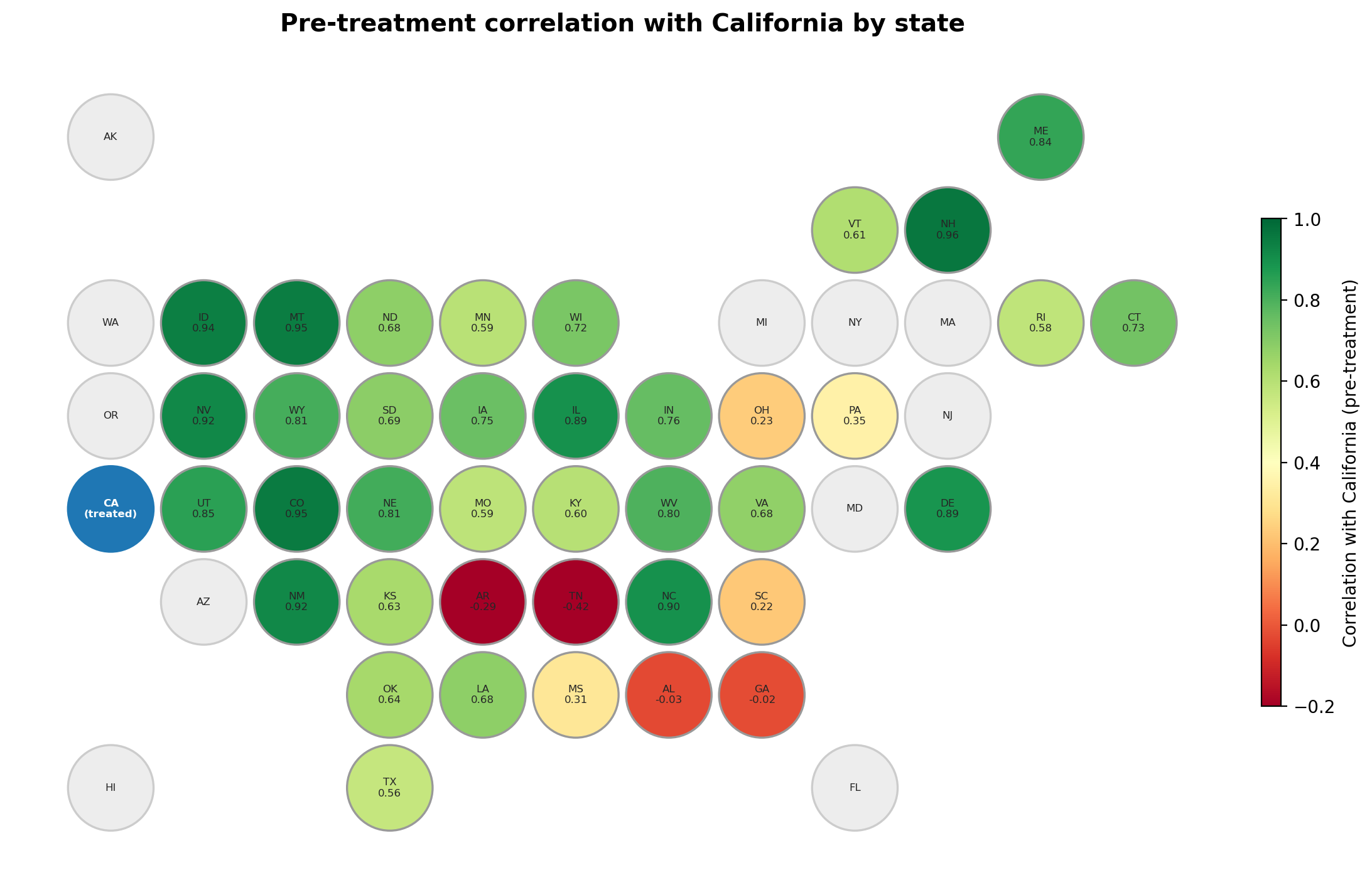

The tile map reveals a clear spatial pattern in donor quality. States in the West and Upper Midwest tend to show the highest correlations with California, since they share similar secular trends in cigarette consumption. States in the South and Deep South are weaker donors, and a few show near-zero or negative correlations. Grey circles are states excluded from the original Abadie et al. [2010] donor pool (they enacted tobacco legislation during the study period). This spatial view complements the heatmap above by making it easy to spot regional clusters of strong and weak donors.

correlation_threshold = 0.0
calif_corr = corr[treated_unit].drop(treated_unit)
# Drop donors whose pre-treatment correlation with California is below the threshold.
excluded = calif_corr[calif_corr < correlation_threshold].index.tolist()
control_units = [s for s in control_units if s not in excluded]
print(f"Correlation threshold: {correlation_threshold}")
print(f"Excluded {len(excluded)} states: {excluded}")
print(f"Remaining donor pool: {len(control_units)} states")

Correlation threshold: 0.0

Excluded 4 states: ['Alabama', 'Arkansas', 'Georgia', 'Tennessee']

Remaining donor pool: 34 states

The convex hull condition#

The synthetic control method uses non-negative weights that sum to one. This means the synthetic control is a convex combination of the control units — it can only produce values within the range spanned by the controls at each time point [Abadie, 2021]. The convex hull requirement is a direct consequence of the non-negativity and sum-to-one constraints on the weights [Doudchenko and Imbens, 2016].
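The following NumPy sketch illustrates the point with made-up data: any non-negative weights that sum to one keep the synthetic series inside the per-period range of the controls, and a simple coverage fraction flags a treated series that falls outside it. The `hull_coverage` helper is a hypothetical name for illustration, not a CausalPy function.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical pre-period outcomes: 10 time points x 4 control units.
controls = rng.uniform(80.0, 140.0, size=(10, 4))

# Non-negative weights that sum to one (a single Dirichlet draw).
weights = rng.dirichlet(np.ones(4))
synthetic = controls @ weights

# A convex combination can never leave the control envelope.
lower, upper = controls.min(axis=1), controls.max(axis=1)
inside = (synthetic >= lower) & (synthetic <= upper)


def hull_coverage(treated, controls):
    """Fraction of time points where the treated series lies inside the envelope."""
    lo, hi = controls.min(axis=1), controls.max(axis=1)
    return float(np.mean((treated >= lo) & (treated <= hi)))


# A treated series above every control at every time point has zero coverage.
treated_outside = upper + 5.0
print(inside.all(), hull_coverage(treated_outside, controls))
```

Note that lying inside the per-period range is a necessary check rather than a guarantee of a good fit: the same weights must work at every time point.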

Note

For the method to work well, the treated unit’s pre-intervention trajectory should lie within the “envelope” of the control units. If all controls are consistently above or below the treated unit, the method cannot construct an accurate counterfactual. Abadie [2021] discusses this condition at length in §4.3.

CausalPy automatically checks this assumption when you create a SyntheticControl object and will issue a warning if violated. The donor_pool_quality() tool in the next section quantifies this condition automatically alongside other diagnostics. See the Convex hull condition glossary entry and Abadie et al. [2010] for more details. The Augmented Synthetic Control Method [Ben-Michael et al., 2021] can handle cases where this assumption is violated.

Create a SyntheticControl design object from the pre-period#

We create a design object using only the pre-intervention data (1970–1988). This object provides the design assessment methods — donor pool quality scoring, dress rehearsal, and power analysis — without requiring post-intervention data.

pre_data = df[df.index < treatment_time]
design = cp.SyntheticControl.from_pre_period(
    pre_data,
    control_units=control_units,
    treated_units=[treated_unit],
    model=cp.pymc_models.WeightedSumFitter(
        sample_kwargs={
            "target_accept": 0.95,
            "random_seed": seed,
        }
    ),
)

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 4 jobs)

NUTS: [beta, y_hat_sigma]

Sampling 4 chains for 1_000 tune and 1_000 draw iterations (4_000 + 4_000 draws total) took 6 seconds.

Sampling: [beta, y_hat, y_hat_sigma]

Sampling: [y_hat]

Sampling: [y_hat]

Sampling: [y_hat]

Is the donor pool adequate?#

Abadie [2021] recommends a structured feasibility assessment before applying the synthetic control method. donor_pool_quality() implements this assessment by aggregating three diagnostics into a scored summary: mean donor correlation, convex hull condition coverage (what fraction of pre-period time points fall within the control envelope), and weight concentration (effective number of donors via the Dirichlet weights). The weight concentration metric is motivated by the observation that SC solutions tend to be sparse — few donors receive non-zero weights — and while some sparsity is natural, extreme concentration in a single donor makes the estimate fragile to that unit’s idiosyncratic shocks [Abadie, 2021]. A pool rated “good” on all three dimensions gives confidence the SC can produce a reliable counterfactual. GeoLift [Meta Incubator, 2022] implements a parallel composite diagnostic in the frequentist setting.
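To make the weight concentration idea concrete, here is a minimal sketch of one common definition of an effective number of donors, the inverse Herfindahl index 1 / Σ wᵢ². This formula is an assumption chosen for illustration; the text above does not state how donor_pool_quality() computes its metric, and the function name below is hypothetical.

```python
import numpy as np


def effective_number_of_donors(weights):
    """Inverse Herfindahl index: 1 / sum(w_i^2) for weights that sum to one."""
    w = np.asarray(weights, dtype=float)
    w = w / w.sum()  # normalise defensively
    return 1.0 / np.sum(w**2)


uniform = np.full(10, 0.1)                    # weight spread evenly over 10 donors
concentrated = np.array([0.91] + [0.01] * 9)  # one donor dominates

print(effective_number_of_donors(uniform))
print(effective_number_of_donors(concentrated))
```

Evenly spread weights give an effective count equal to the number of donors, while a single dominant donor pulls the value toward one, which is the fragile case described above.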

quality = design.donor_pool_quality()
quality.summary()

|  | metric | value | assessment |
|---|---|---|---|
| 0 | Mean donor correlation | 0.635 | acceptable |
| 1 | Convex hull coverage | 100.0% | good |
| 2 | Effective number of donors | 10.11 | good |
| 3 | Overall quality | acceptable | acceptable |

Can the design recover a known effect?#

A dress rehearsal answers: if there were a real effect of a given size, would this design detect it? This approach is conceptually a placebo-in-time test — Abadie et al. [2010] introduced the idea of reassigning the intervention to a pre-period date and checking model behaviour (§4.2), and Abadie [2021] recommends it as a standard validation exercise (§7.2). The dress rehearsal extends this concept by injecting a known effect into the held-out window, rather than testing for the absence of an effect. This simulation-based validation is the standard approach in the geo-experiment design literature — GeoLift [Meta Incubator, 2022] uses the same pattern, while Cattaneo et al. [2021] develop a related simulation-based approach for constructing prediction intervals in the SC framework.

How the effect injection works. The procedure uses only the pre-period data (1970–1988), splitting it into two parts:

The first ~75% becomes the pseudo-pre-period — the data the SC model will train on.

The remaining ~25% becomes the pseudo-post-period — the window where we simulate an intervention.

In the pseudo-post window, a known effect is injected into the treated unit’s values. The effect_type parameter controls how:

"relative" (default): each treated value is multiplied by (1 + injected_effect). So injected_effect=0.15 turns every treated observation \(y_t\) into \(1.15 \cdot y_t\), a 15% uplift.

"absolute": the value is added directly, so injected_effect=5.0 adds 5 units to each observation.

The injected truth is the total cumulative signal that was added — the sum across all pseudo-post time points of the difference between the modified and original values. For relative effects, this equals sum(original_values) × injected_effect.
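The arithmetic can be sketched in a few lines. This is an illustrative re-implementation under the description above, not CausalPy's internal code; the `inject_effect` helper and the pseudo-post values are hypothetical.

```python
import numpy as np


def inject_effect(values, injected_effect, effect_type="relative"):
    """Return the modified values and the cumulative injected truth."""
    values = np.asarray(values, dtype=float)
    if effect_type == "relative":
        modified = values * (1.0 + injected_effect)
    elif effect_type == "absolute":
        modified = values + injected_effect
    else:
        raise ValueError(f"unknown effect_type: {effect_type!r}")
    # Injected truth: total signal added across the pseudo-post window.
    injected_truth = float(np.sum(modified - values))
    return modified, injected_truth


# Hypothetical treated-unit values in a 5-period pseudo-post window (sum = 480).
pseudo_post = np.array([100.0, 98.0, 96.0, 94.0, 92.0])

_, truth_rel = inject_effect(pseudo_post, 0.15, "relative")
_, truth_abs = inject_effect(pseudo_post, 5.0, "absolute")
print(truth_rel, truth_abs)
```

For the relative case the truth is 480 × 0.15, matching the sum(original_values) × injected_effect identity; for the absolute case it is 5 units times 5 periods.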

A fresh SC model is then fitted on the pseudo-pre data, with the modified pseudo-post data as the “post-intervention” period. The model has no knowledge of the injected effect — it must discover it from the gap between the treated unit and its synthetic control in the pseudo-post window.

The dress rehearsal passes when the 94% HDI of the posterior cumulative impact covers the injected truth — the model can both detect and correctly estimate the effect magnitude.

Important

The dress rehearsal and the power analysis use different success criteria. The dress rehearsal asks: does the posterior recover the true effect? (HDI covers the injected truth). The power analysis below asks: does the posterior detect that any effect exists? (HDI excludes zero). A design can detect an effect exists without precisely estimating its magnitude, and vice versa.
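The two criteria can be contrasted on a single set of posterior draws. The sketch below uses a hand-rolled highest-density interval and hypothetical draws; in practice a library routine such as ArviZ's hdi would normally be used, and the numbers here are illustrative only.

```python
import numpy as np


def hdi(samples, prob=0.94):
    """Narrowest interval containing `prob` of the samples."""
    x = np.sort(np.asarray(samples, dtype=float))
    n = len(x)
    k = int(np.ceil(prob * n))             # number of samples inside the interval
    widths = x[k - 1:] - x[: n - k + 1]    # width of every candidate interval
    start = int(np.argmin(widths))
    return x[start], x[start + k - 1]


rng = np.random.default_rng(0)

# Hypothetical posterior draws of cumulative impact, centred below the truth.
draws = rng.normal(loc=33.0, scale=8.0, size=4000)
injected_truth = 74.235

lower, upper = hdi(draws)
covers_truth = bool(lower <= injected_truth <= upper)  # dress-rehearsal criterion
excludes_zero = bool(lower > 0 or upper < 0)           # power-analysis criterion
print(covers_truth, excludes_zero)
```

Here the interval sits clearly above zero yet misses the injected truth, so the same posterior would count as a detection in the power analysis while failing the dress rehearsal.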

rehearsal = design.validate_design(
    injected_effect=0.15,
    holdout_periods=5,
)
fig, ax = rehearsal.plot()
rehearsal.summary()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.196), 'Kansas' (r=-0.211), 'Louisiana' (r=-0.126), 'Minnesota' (r=-0.201), 'Mississippi' (r=-0.195), 'North Dakota' (r=-0.155), 'Ohio' (r=-0.575), 'Oklahoma' (r=-0.230), 'Pennsylvania' (r=-0.416), 'South Carolina' (r=-0.014), 'Texas' (r=-0.405)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 4 jobs)

NUTS: [beta, y_hat_sigma]

Sampling 4 chains for 1_000 tune and 1_000 draw iterations (4_000 + 4_000 draws total) took 5 seconds.

There were 2 divergences after tuning. Increase `target_accept` or reparameterize.

Sampling: [beta, y_hat, y_hat_sigma]

Sampling: [y_hat]

Sampling: [y_hat]

Sampling: [y_hat]

Sampling: [y_hat]

|  | injected_effect | effect_type | injected_truth | recovered_mean | recovered_hdi_lower | recovered_hdi_upper | hdi_covers_truth | bias | relative_bias |
|---|---|---|---|---|---|---|---|---|---|
| 0 | 0.15 | relative | 74.235 | 33.024002 | 17.945903 | 49.978769 | False | -41.210998 | -0.555142 |

How to read this plot. The dress rehearsal plot shows the posterior cumulative causal impact over the pseudo-post period. The shaded band is the 94% HDI — the range of cumulative effects the model considers plausible. The dashed line marks the injected truth, the known cumulative effect we added to the data.

Pass (HDI covers injected truth): The model can both detect and correctly estimate an effect of this magnitude. The design has sufficient sensitivity for this effect size.

Fail (HDI misses injected truth): The model’s posterior is too narrow (overconfident) or systematically biased. This may indicate that the pre-period is too short, the donor pool is inadequate, or the effect size is too small relative to noise.

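The printed summary reports the recovered posterior mean, the HDI bounds, and whether the interval covers the truth. In the run above, hdi_covers_truth is False: the recovered mean (33.0) falls well short of the injected truth (74.2), a relative bias of about -56%, and the accompanying warning shows that several donors are negatively correlated with California over the shortened pseudo-pre-period. If the dress rehearsal passes, proceed to the power analysis below to map out detection rates across a range of effect sizes.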

What is the minimum detectable effect size?#

The dress rehearsal tests a single effect size. A power analysis extends this across a range of candidates to produce a Bayesian power curve — for each effect size, what fraction of simulations successfully detect the effect? Simulation-based power analysis is the industry standard for SC experiment design, pioneered by GeoLift [Meta Incubator, 2022] and supported by Athey and Imbens [2017], who note the importance of supplementary simulation exercises to strengthen the credibility of identification strategies.

How it works. For each candidate effect size, multiple simulations are run. Each simulation:

Adds random noise (drawn from the fitted model’s residual distribution) to the treated unit in the pre-period, creating a realistic “what if we ran this experiment again?” scenario.

Fits a fresh SC on the noisy pre-period data.

Runs a dress rehearsal with the candidate effect size — injecting the effect into the pseudo-post window as described above.

Evaluates whether the effect was detected: does the 94% HDI of the cumulative impact exclude zero?

Note the difference from the dress rehearsal criterion: the power analysis asks whether the model can tell that any effect exists (HDI excludes zero), not whether it recovers the exact magnitude (HDI covers truth). The detection rate across simulations gives the power at that effect size. The minimum detectable effect (MDE) is the smallest effect size where the power curve crosses the conventional 80% threshold.
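As a small worked example, the sketch below turns per-simulation detection flags into a power curve and reads off the MDE. The flags are invented for illustration and the variable names are hypothetical; they are not output from power_analysis().

```python
import numpy as np

effect_sizes = np.array([0.05, 0.10, 0.15, 0.20, 0.25])

# Hypothetical detection flags: rows are effect sizes, columns are simulations.
# True means the 94% HDI of the cumulative impact excluded zero in that run.
detected = np.array(
    [
        [0, 0, 1, 0, 0, 0, 1, 0, 0, 0],
        [1, 0, 1, 0, 1, 1, 0, 1, 0, 0],
        [1, 1, 1, 1, 1, 1, 1, 1, 0, 1],
        [1, 1, 1, 1, 1, 1, 1, 1, 0, 1],
        [1, 1, 1, 1, 1, 1, 1, 1, 1, 1],
    ],
    dtype=bool,
)

power = detected.mean(axis=1)  # detection rate per effect size
meets_target = power >= 0.80   # conventional 80% threshold

# Smallest effect size meeting the threshold (assumes a roughly monotone curve).
mde = float(effect_sizes[meets_target][0]) if meets_target.any() else None

print(power.tolist(), mde)
```

With only a handful of simulations per effect size the estimated power moves in coarse steps, which is why more simulations give a smoother curve and a more stable MDE.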

Unlike frequentist power (which asks “does the p-value fall below 0.05?”), Bayesian power here asks: does the 94% HDI of the cumulative impact exclude zero? This posterior-based approach to detection is a natural advantage of the Bayesian framework: Brodersen et al. [2015] demonstrate how posterior predictive distributions quantify uncertainty in the counterfactual, and Kim et al. [2020] extend this specifically to synthetic control, arguing that the Bayesian framework provides “straightforward statistical inference” while avoiding the restrictive assumptions of frequentist SC inference.

Note

We use n_simulations=10 and lighter MCMC settings (500 tuning steps and 500 draws per chain) to keep runtime reasonable for documentation builds. In practice, more simulations (50–100) produce smoother power curves.

# Lighter sampler settings to keep the documentation build fast.
fast_kwargs = {
    "chains": 4,
    "draws": 500,
    "tune": 500,
    "progressbar": False,
    "target_accept": 0.95,
    "random_seed": 42,
}
power_curve = design.power_analysis(
    effect_sizes=np.linspace(0.05, 0.25, 5),
    n_simulations=10,
    sample_kwargs=fast_kwargs,
    random_seed=42,
)

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 4 jobs)

NUTS: [beta, y_hat_sigma]

Sampling 4 chains for 500 tune and 500 draw iterations (2_000 + 2_000 draws total) took 3 seconds.

Sampling: [beta, y_hat, y_hat_sigma]

Sampling: [y_hat]

Sampling: [y_hat]

Sampling: [y_hat]

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.004), 'Kansas' (r=-0.020), 'Minnesota' (r=-0.180), 'Mississippi' (r=-0.041), 'Ohio' (r=-0.249), 'Oklahoma' (r=-0.034), 'Pennsylvania' (r=-0.108), 'Texas' (r=-0.131)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.001), 'Ohio' (r=-0.240), 'Pennsylvania' (r=-0.075), 'Texas' (r=-0.073)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.104), 'Ohio' (r=-0.238), 'Pennsylvania' (r=-0.120), 'Texas' (r=-0.101)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.041), 'Minnesota' (r=-0.086), 'Mississippi' (r=-0.157), 'Ohio' (r=-0.288), 'Oklahoma' (r=-0.067), 'Pennsylvania' (r=-0.184), 'South Carolina' (r=-0.015), 'Texas' (r=-0.173)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.115), 'Kansas' (r=-0.238), 'Louisiana' (r=-0.070), 'Minnesota' (r=-0.183), 'Mississippi' (r=-0.138), 'North Dakota' (r=-0.078), 'Ohio' (r=-0.460), 'Oklahoma' (r=-0.135), 'Pennsylvania' (r=-0.314), 'South Carolina' (r=-0.037), 'Texas' (r=-0.307)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.088), 'Ohio' (r=-0.261), 'Texas' (r=-0.003)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.035), 'Ohio' (r=-0.204), 'Pennsylvania' (r=-0.111), 'Texas' (r=-0.072)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.109)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.238), 'Minnesota' (r=-0.072), 'Mississippi' (r=-0.046), 'Ohio' (r=-0.499), 'Oklahoma' (r=-0.019), 'Pennsylvania' (r=-0.185), 'Texas' (r=-0.195)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.345), 'Kansas' (r=-0.127), 'Minnesota' (r=-0.115), 'Ohio' (r=-0.360), 'Oklahoma' (r=-0.012), 'Pennsylvania' (r=-0.220), 'Texas' (r=-0.181)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Mississippi' (r=-0.001), 'Ohio' (r=-0.263), 'Pennsylvania' (r=-0.050), 'Texas' (r=-0.027)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.074), 'Minnesota' (r=-0.040), 'Mississippi' (r=-0.164), 'North Dakota' (r=-0.003), 'Ohio' (r=-0.296), 'Oklahoma' (r=-0.099), 'Pennsylvania' (r=-0.151), 'South Carolina' (r=-0.060), 'Texas' (r=-0.162)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.020)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.052), 'Mississippi' (r=-0.024), 'Ohio' (r=-0.281), 'Pennsylvania' (r=-0.116), 'Texas' (r=-0.087)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

There was 1 divergence after tuning. Increase `target_accept` or reparameterize.

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.051)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.113), 'Kansas' (r=-0.125), 'Louisiana' (r=-0.028), 'Minnesota' (r=-0.034), 'Mississippi' (r=-0.112), 'Ohio' (r=-0.468), 'Oklahoma' (r=-0.076), 'Pennsylvania' (r=-0.222), 'Texas' (r=-0.232)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.121), 'South Carolina' (r=-0.025)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.394), 'Louisiana' (r=-0.276), 'Minnesota' (r=-0.042), 'Mississippi' (r=-0.404), 'Missouri' (r=-0.122), 'North Dakota' (r=-0.300), 'Ohio' (r=-0.497), 'Oklahoma' (r=-0.352), 'Pennsylvania' (r=-0.441), 'Rhode Island' (r=-0.024), 'South Carolina' (r=-0.251), 'South Dakota' (r=-0.153), 'Texas' (r=-0.418), 'Vermont' (r=-0.206)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.064), 'Mississippi' (r=-0.057), 'Ohio' (r=-0.299), 'Pennsylvania' (r=-0.175), 'Texas' (r=-0.132)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.039)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

The rhat statistic is larger than 1.01 for some parameters. This indicates problems during sampling. See https://arxiv.org/abs/1903.08008 for details

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.022), 'Kansas' (r=-0.177), 'Louisiana' (r=-0.021), 'Minnesota' (r=-0.074), 'Mississippi' (r=-0.137), 'North Dakota' (r=-0.036), 'Ohio' (r=-0.434), 'Oklahoma' (r=-0.141), 'Pennsylvania' (r=-0.311), 'South Carolina' (r=-0.012), 'Texas' (r=-0.271)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.025)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.142), 'Minnesota' (r=-0.019), 'Ohio' (r=-0.377), 'Pennsylvania' (r=-0.132), 'Texas' (r=-0.129)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.013)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.351), 'Louisiana' (r=-0.190), 'Minnesota' (r=-0.108), 'Mississippi' (r=-0.322), 'Missouri' (r=-0.035), 'North Dakota' (r=-0.249), 'Ohio' (r=-0.409), 'Oklahoma' (r=-0.282), 'Pennsylvania' (r=-0.401), 'Rhode Island' (r=-0.040), 'South Carolina' (r=-0.179), 'South Dakota' (r=-0.093), 'Texas' (r=-0.369), 'Vermont' (r=-0.094)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.071), 'Minnesota' (r=-0.040), 'Mississippi' (r=-0.059), 'Ohio' (r=-0.139), 'Pennsylvania' (r=-0.133), 'Texas' (r=-0.095)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.115), 'Mississippi' (r=-0.054), 'North Dakota' (r=-0.025), 'Ohio' (r=-0.250), 'Oklahoma' (r=-0.040), 'Pennsylvania' (r=-0.160), 'Texas' (r=-0.115)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.014)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.169), 'Louisiana' (r=-0.095), 'Mississippi' (r=-0.253), 'North Dakota' (r=-0.075), 'Ohio' (r=-0.403), 'Oklahoma' (r=-0.155), 'Pennsylvania' (r=-0.275), 'South Carolina' (r=-0.068), 'Texas' (r=-0.251)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.236), 'Pennsylvania' (r=-0.001)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.242), 'Kansas' (r=-0.318), 'Louisiana' (r=-0.199), 'Minnesota' (r=-0.286), 'Mississippi' (r=-0.233), 'Missouri' (r=-0.023), 'Nebraska' (r=-0.044), 'North Dakota' (r=-0.198), 'Ohio' (r=-0.632), 'Oklahoma' (r=-0.269), 'Pennsylvania' (r=-0.442), 'South Carolina' (r=-0.078), 'South Dakota' (r=-0.029), 'Texas' (r=-0.460), 'Vermont' (r=-0.010)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.260), 'Louisiana' (r=-0.075), 'Mississippi' (r=-0.171), 'North Dakota' (r=-0.126), 'Ohio' (r=-0.270), 'Oklahoma' (r=-0.134), 'Pennsylvania' (r=-0.230), 'South Carolina' (r=-0.060), 'Texas' (r=-0.228), 'Vermont' (r=-0.012)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.194), 'Minnesota' (r=-0.009), 'Ohio' (r=-0.331), 'Pennsylvania' (r=-0.095), 'Texas' (r=-0.101)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

There was 1 divergence after tuning. Increase `target_accept` or reparameterize.

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.008), 'Kansas' (r=-0.163), 'Louisiana' (r=-0.084), 'Minnesota' (r=-0.210), 'Mississippi' (r=-0.239), 'Missouri' (r=-0.012), 'North Dakota' (r=-0.147), 'Ohio' (r=-0.321), 'Oklahoma' (r=-0.201), 'Pennsylvania' (r=-0.293), 'South Carolina' (r=-0.180), 'South Dakota' (r=-0.081), 'Texas' (r=-0.281), 'Vermont' (r=-0.063)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

The rhat statistic is larger than 1.01 for some parameters. This indicates problems during sampling. See https://arxiv.org/abs/1903.08008 for details

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.063)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.054), 'Kansas' (r=-0.163), 'Louisiana' (r=-0.006), 'Mississippi' (r=-0.087), 'North Dakota' (r=-0.012), 'Ohio' (r=-0.456), 'Oklahoma' (r=-0.072), 'Pennsylvania' (r=-0.266), 'Texas' (r=-0.234)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.025), 'Mississippi' (r=-0.106), 'Ohio' (r=-0.269), 'Pennsylvania' (r=-0.148), 'Texas' (r=-0.089)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.122), 'Kansas' (r=-0.121), 'Louisiana' (r=-0.006), 'Minnesota' (r=-0.044), 'Mississippi' (r=-0.092), 'Ohio' (r=-0.407), 'Oklahoma' (r=-0.075), 'Pennsylvania' (r=-0.187), 'Texas' (r=-0.221)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.048)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.115), 'Kansas' (r=-0.264), 'Louisiana' (r=-0.135), 'Minnesota' (r=-0.077), 'Mississippi' (r=-0.202), 'North Dakota' (r=-0.133), 'Ohio' (r=-0.487), 'Oklahoma' (r=-0.213), 'Pennsylvania' (r=-0.350), 'South Carolina' (r=-0.094), 'South Dakota' (r=-0.039), 'Texas' (r=-0.327), 'Vermont' (r=-0.040)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.018)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.087), 'Iowa' (r=-0.076), 'Kansas' (r=-0.466), 'Kentucky' (r=-0.062), 'Louisiana' (r=-0.318), 'Minnesota' (r=-0.496), 'Mississippi' (r=-0.365), 'Missouri' (r=-0.168), 'Nebraska' (r=-0.213), 'North Dakota' (r=-0.356), 'Ohio' (r=-0.521), 'Oklahoma' (r=-0.403), 'Pennsylvania' (r=-0.488), 'Rhode Island' (r=-0.064), 'South Carolina' (r=-0.317), 'South Dakota' (r=-0.209), 'Texas' (r=-0.517), 'Vermont' (r=-0.227), 'Virginia' (r=-0.039)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.035)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.079), 'Kansas' (r=-0.123), 'Minnesota' (r=-0.038), 'Mississippi' (r=-0.045), 'Ohio' (r=-0.409), 'Oklahoma' (r=-0.027), 'Pennsylvania' (r=-0.239), 'Texas' (r=-0.219)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.040)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 4 jobs)

NUTS: [beta, y_hat_sigma]

Sampling 4 chains for 500 tune and 500 draw iterations (2_000 + 2_000 draws total) took 3 seconds.

There were 2 divergences after tuning. Increase `target_accept` or reparameterize.

Sampling: [beta, y_hat, y_hat_sigma]

Sampling: [y_hat]

Sampling: [y_hat]

Sampling: [y_hat]

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Kansas' (r=-0.127), 'Louisiana' (r=-0.124), 'Minnesota' (r=-0.241), 'Mississippi' (r=-0.240), 'Missouri' (r=-0.032), 'North Dakota' (r=-0.122), 'Ohio' (r=-0.406), 'Oklahoma' (r=-0.181), 'Pennsylvania' (r=-0.229), 'South Carolina' (r=-0.139), 'South Dakota' (r=-0.041), 'Texas' (r=-0.283)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.047), 'Kansas' (r=-0.032), 'Ohio' (r=-0.197), 'Pennsylvania' (r=-0.065), 'Texas' (r=-0.053)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Ohio' (r=-0.078)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.025), 'Kansas' (r=-0.118), 'Louisiana' (r=-0.106), 'Minnesota' (r=-0.063), 'Mississippi' (r=-0.207), 'North Dakota' (r=-0.137), 'Ohio' (r=-0.472), 'Oklahoma' (r=-0.170), 'Pennsylvania' (r=-0.281), 'South Carolina' (r=-0.069), 'South Dakota' (r=-0.038), 'Texas' (r=-0.279)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

/Users/benjamv/git/CausalPy/causalpy/experiments/synthetic_control.py:129: UserWarning: Control units ['Connecticut' (r=-0.052), 'Minnesota' (r=-0.051), 'Mississippi' (r=-0.018), 'Ohio' (r=-0.306), 'Pennsylvania' (r=-0.088), 'Texas' (r=-0.097)] have pre-treatment correlation below 0.0 or undefined with treated unit 'California'. Consider excluding them from the donor pool. Use cp.plot_correlations() to inspect. See Abadie (2021) for guidance on donor pool selection.

self._check_donor_correlations()

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 4 jobs)

NUTS: [beta, y_hat_sigma]

Sampling 4 chains for 500 tune and 500 draw iterations (2_000 + 2_000 draws total) took 4 seconds.

Sampling: [beta, y_hat, y_hat_sigma]

Sampling: [y_hat]

Sampling: [y_hat]

Sampling: [y_hat]